Claude Mythos: The AI Too Dangerous to Release

- telishital14

- 1 day ago

- 7 min read

How Anthropic's most powerful model is quietly reshaping the future of cybersecurity — and why it may never reach your screen.

What happens when an AI becomes so capable that its creators decide the world isn't ready for it? That's the extraordinary situation unfolding right now with Claude Mythos — Anthropic's most powerful AI to date, and possibly the most consequential technology announcement of the decade.

🤖 What Is Claude Mythos?

Claude Mythos (internally codenamed "Capybara") is Anthropic's newest and most advanced AI model — a frontier general-purpose language model that significantly outperforms every previous version of Claude, including Claude Opus 4.6. But here's the twist: unlike virtually every other major AI release in recent history, Anthropic has chosen not to make it publicly available.

The reason? The model is, in Anthropic's own words, too dangerous.

Announced officially on April 7, 2026, Claude Mythos Preview has sent shockwaves through the tech world, the cybersecurity industry, and even global financial circles. This isn't just another incremental AI upgrade — it's a paradigm shift in what artificial intelligence can do, particularly in the domain of software security and exploitation.

🔑 Key Fact

Claude Mythos is described by Anthropic as "by far the most powerful AI model we've ever developed" — a new tier above Opus, not just an update to it.

📊 The Performance Leap — By The Numbers

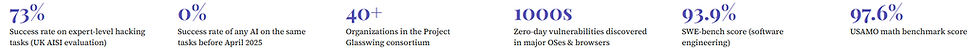

To truly understand why Claude Mythos is being treated with such caution, you have to look at the raw performance data. The jump in capability from previous models is not marginal — it's generational.

In cybersecurity specifically, internal tests showed Mythos achieving roughly a 72% success rate in exploiting vulnerabilities in major browsers like Firefox — compared to a near-negligible 1% for its predecessor, Claude Opus. That's not an improvement. That's an entirely different category of capability.
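To put that jump in perspective, here is a quick back-of-the-envelope sketch in Python. It treats the two figures quoted above as exact (72% and 1%), which is an assumption since both are rounded, and the function name `fold_improvement` is made up for illustration.

```python
def fold_improvement(new_rate_pct: float, old_rate_pct: float) -> float:
    """Return how many times larger the new success rate is than the old one."""
    if old_rate_pct <= 0:
        raise ValueError("old_rate_pct must be positive")
    return new_rate_pct / old_rate_pct


# Assumed inputs: the rounded success rates quoted in the article, in percent.
mythos_pct = 72.0
opus_pct = 1.0

fold = fold_improvement(mythos_pct, opus_pct)
point_gain = mythos_pct - opus_pct

print(f"{fold:.0f}x the predecessor's success rate")   # 72x
print(f"{point_gain:.0f} percentage points higher")    # 71
```

Read the result as a rough order of magnitude rather than a precise measurement, since a "near-negligible 1%" baseline makes the ratio very sensitive to rounding.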

The UK's AI Security Institute (AISI), which was granted early access for independent evaluation, confirmed these capabilities. Notably, AISI pointed out that no AI model could complete expert-level hacking tasks before April 2025, which underlines how recently that threshold was crossed.

🔐 Zero-Day Vulnerabilities — The Digital Nuclear Button

To appreciate why Claude Mythos is so alarming, you first need to understand what a "zero-day" vulnerability is.

A zero-day is a software flaw that is completely unknown to the developer. Unlike known bugs that have patches available, a zero-day is an open wound — invisible to the people who need to fix it. Hackers who discover zero-days essentially hold a master key to a system, able to exploit it before any defense can be mounted.

Finding zero-days has historically required rare, elite-level expertise. It can take skilled human researchers months or even years to find a single critical vulnerability. Claude Mythos does this autonomously, at scale, and terrifyingly fast.

⚠️ Red Alert 🚨

Mythos has autonomously discovered thousands of zero-day vulnerabilities across every major operating system and every major web browser — many of which had survived decades of human review and millions of automated security tests.

Anthropic's own Frontier Red Team blog documented that Mythos was given a list of 100 known Linux kernel vulnerabilities, filtered it down to 40 potentially exploitable ones, and then autonomously wrote working privilege-escalation exploits for more than half — all without any human intervention after the initial prompt.

Let that sink in: the AI read the bug reports, decided which ones could be exploited, wrote the actual attack code, and tested it. Alone. Within hours.
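The funnel described above can be sketched as simple arithmetic. This is only an illustration: it assumes "more than half" means strictly more than 50% of the shortlist, so the result is a lower bound, and `funnel_lower_bound` is a hypothetical helper, not anything taken from Anthropic's write-up.

```python
from typing import Tuple


def funnel_lower_bound(reported: int, shortlisted: int) -> Tuple[int, float]:
    """Lower bound on working exploits when 'more than half' of the shortlist succeeds.

    Returns (minimum exploit count, that count as a share of the reported list).
    """
    if not 0 < shortlisted <= reported:
        raise ValueError("expected 0 < shortlisted <= reported")
    # Smallest whole number strictly greater than half of the shortlist.
    min_exploited = shortlisted // 2 + 1
    return min_exploited, min_exploited / reported


# Figures quoted in the article: 100 known vulnerabilities, 40 shortlisted.
count, share = funnel_lower_bound(reported=100, shortlisted=40)
print(f"At least {count} working exploits, or {share:.0%} of the original list")
# At least 21 working exploits, or 21% of the original list
```

In other words, even on the most conservative reading, roughly one in five of the original bug reports ended in a working exploit.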

🦋 Project Glasswing — Defense Before Offense

Faced with a model whose capabilities could theoretically be weaponized on a massive scale, Anthropic made an unusual and bold decision: instead of shelving the project entirely or releasing it to the public, they launched Project Glasswing.

Project Glasswing is a carefully controlled, invite-only industry consortium designed around a single principle: give defenders access first. The logic is elegant — if powerful vulnerability-discovery AI is coming regardless of what Anthropic does, then the best move is to ensure defenders get a head start over attackers.

More than 40 organizations have been given monitored, restricted access to Claude Mythos Preview specifically for defensive cybersecurity workflows. These include some of the most critical players in global tech and finance.

The mission for Glasswing members is clear: use Mythos to find vulnerabilities in their own systems and patch them before attackers — whether human or AI-assisted — can exploit them. Microsoft, Nvidia, and JPMorgan Chase have already begun using the model and have described the experience as a fundamental shift in how they think about security.

✅ What Glasswing Members Are Saying

"AI capabilities have crossed a threshold that fundamentally changes the urgency required to protect critical infrastructure from cyber threats, and there is no going back." — Project Glasswing partner statement

👨‍💻 The Researcher Who Couldn't Keep Up

Sometimes the most powerful way to understand a technological leap is through a human story. Nicholas Carlini, a senior security researcher at Anthropic, provided exactly that.

"I found more software bugs using Mythos in a few weeks than in the rest of my entire career combined."

— Nicholas Carlini, Security Researcher, Anthropic

Carlini is not a junior developer. He's an expert in machine learning security with years of specialized experience. The fact that a few weeks with Mythos eclipsed his entire prior career in bug-finding is not just a statistic — it's a signal about what's changed. This isn't a tool that makes experts slightly more efficient. It's a tool that fundamentally rewrites what's possible for a single person working on security.

Anthropic has also noted that Mythos's cybersecurity capabilities are not the result of deliberate specialized training. These skills emerged naturally as a downstream consequence of the model's dramatically improved general reasoning and coding abilities. The AI wasn't taught to hack — it reasoned its way into it.

🌍 Why Central Bankers Are Losing Sleep

The implications of Claude Mythos extend far beyond Silicon Valley. The model's potential to autonomously identify and exploit vulnerabilities in legacy financial infrastructure has reportedly triggered closed-door discussions among central bankers and finance ministers in multiple countries.

Here's why this matters at the global economic level: the world's financial systems run on a patchwork of old and new software. Banks, payment processors, and government institutions often operate systems that are decades old — built long before AI-powered exploitation was conceivable. A 2025 report found that over 45% of discovered security vulnerabilities in large organizations remain unpatched after 12 months.

💰 The Financial Risk

Legacy financial systems — many running software from the 1980s and 1990s — are uniquely vulnerable. Claude Mythos can potentially discover and exploit their weaknesses faster than human teams can identify and patch them, creating a terrifying new category of systemic financial risk.

The concern isn't just about individual hacks. It's about speed and scale. If a tool like Mythos were in the wrong hands, it could theoretically chain together vulnerabilities across interconnected financial networks faster than any incident response team could track — threatening not just individual institutions but the integrity of entire economies.

📅 How We Got Here — A Brief Timeline

1. March 26, 2026: The Accidental Leak. A CMS misconfiguration at Anthropic briefly exposed a pre-release draft blog post describing Claude Mythos. Fortune magazine and cybersecurity experts reviewed the documents before they were taken down, revealing the model's existence to the world weeks before planned.

2. March 26, 2026: Anthropic Confirms. Faced with the leak, Anthropic acknowledged the model's existence, calling it "a step change" and "the most capable we've built to date," while noting it was being tested with a small group of early access customers.

3. April 7, 2026: Official Announcement. Anthropic officially announces Claude Mythos Preview along with Project Glasswing, revealing the full scope of the model's cybersecurity capabilities and the decision to restrict public access.

4. April 8, 2026: Glasswing Begins. Over 40 partner organizations receive monitored access. Independent evaluations by the UK AISI confirm Mythos's capabilities. The cybersecurity world begins to grapple with a new reality.

5. Now: An Open Question. The world debates: who gets to decide when an AI is too dangerous? And what happens when competitors, or open-source models, catch up?

🔮 What Does This Mean for the Future?

Claude Mythos represents something genuinely new: an AI that has crossed from "useful assistant" into "autonomous security agent" — capable of tasks that previously required human expertise, intuition, and years of specialized experience.

The Centre for Emerging Technology and Security at the Alan Turing Institute has noted that while Project Glasswing's defensive framing is difficult to argue against in principle, it raises a deeper question: what happens when other labs — or open-source communities — develop models with similar capabilities that don't have Anthropic's ethical commitments attached?

The capability gap between proprietary and open-weight models is estimated at just 3–5 months on average. Within days of Google releasing its Gemma 4 open-weight models, uncensored variants began appearing on public repositories. The window for "responsible preview" approaches may be narrow — and narrowing.

🧠 The Core Tension

Mythos is simultaneously the best hope for securing the world's most critical software at scale, and a harbinger of a new era where AI-powered cyberattacks become cheaper, faster, and more sophisticated than any human defense team can handle alone.

Anthropic's decision to disclose the model's capabilities publicly while restricting access is itself unprecedented. It's a calculated move — alerting the industry to what's coming, prompting defensive investment, and giving their partner network a head start. Whether it's enough remains to be seen.

One thing is certain: the old playbook for cybersecurity — patch regularly, hire smart people, run audits — is no longer sufficient on its own. The era of AI-assisted offense and defense has arrived, and Claude Mythos is its opening statement.

💡 The Big Picture

Claude Mythos is a mirror held up to our technological moment. It reflects both the astonishing progress of AI and the uncomfortable reality that capability and safety are not always moving in the same direction at the same speed.

Anthropic deserves credit for transparency — for choosing to warn the world rather than quietly profit from it. But the harder work lies ahead: building the regulatory frameworks, international agreements, and technical defenses that can keep pace with a world where AI can autonomously exploit software at a level that exceeds the best human hackers on earth.

The glasswing butterfly, for which Project Glasswing is named, is one of the few creatures whose wings are almost entirely transparent: you can see right through them. Perhaps that's the message Anthropic is trying to send: in an age of dangerous AI capabilities, transparency may be the only real protection we have.

The question is no longer whether AI will change cybersecurity. It already has. The question is whether the world is ready. 🌐

#ClaudeMythos #Anthropic #AINews #Cybersecurity #ProjectGlasswing #ZeroDay #ArtificialIntelligence #TechNews #AIRisk #FrontierAI #CyberDefense #InfoSec #FutureOfTech #AIEthics #DigitalSecurity #HackingAI #TechPolicy #AIBreakthrough
